Cybersecurity functions revolve around three core goals: the confidentiality, integrity, and availability of data. These goals also outline the appropriate focal points for network security. I’ll go through many of the technologies and processes used to secure modern-day networks, in no particular order. Please note that this isn’t a comprehensive list, but rather just SOME of the ways in which we can protect our networks.

Network Access Control

“Network Access Control,” or “NAC,” is a set of controls layered onto the network to ensure proper access to the network itself and the resources on that network. Implementing NAC helps prevent unauthorized access and, should a breach of the network occur, helps ensure it is detected and resolved quickly.

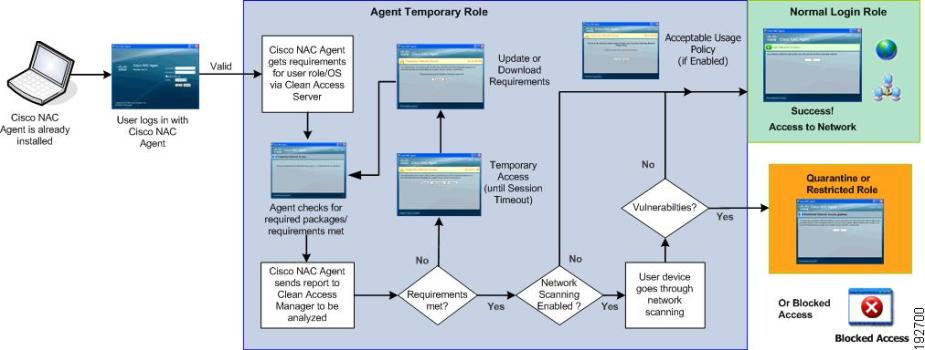

Cisco’s Posture Assessment for Network Admission Control. Reprinted from “Cisco NAC Appliance – Clean Access Manager Configuration Guide, Release 4.8(3),” by Cisco, 2017.

NAC could be something as simple as requiring a password to authenticate onto the network or something as robust as Cisco’s “Posture Assessment,” an available feature for Cisco’s network appliances. Whichever NAC implementation an organization chooses to use depends on the cost, feasibility, technical constraints, and security requirements.
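To make the idea of a posture check a little more concrete, here is a minimal Python sketch of an admission decision. The attribute names (`authenticated`, `antivirus_running`, `os_patched`) and the three outcomes are hypothetical and chosen for illustration; they are not taken from Cisco’s product.

```python
from dataclasses import dataclass


@dataclass
class Endpoint:
    """Facts a hypothetical NAC agent might report about a device."""
    authenticated: bool
    antivirus_running: bool
    os_patched: bool


def admission_decision(endpoint: Endpoint) -> str:
    """Return 'deny', 'quarantine', or 'allow' for a connecting device."""
    # Devices that fail authentication never reach the network.
    if not endpoint.authenticated:
        return "deny"
    # Authenticated but unhealthy devices go to a restricted segment
    # where they can be remediated.
    if not (endpoint.antivirus_running and endpoint.os_patched):
        return "quarantine"
    return "allow"
```

A password-only NAC corresponds to the first check alone; the second check is where a posture assessment would add its health requirements.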

Risk Assessments

The organization’s risk appetite must also be assessed by identifying and analyzing the current risks facing the network and the cost of recovering from attacks that result from these risks. Organizations usually devise quantitative or qualitative risk assessments, which evaluate the risks involved for the organization’s critical assets, non-critical assets, and processes. This gives organizations a good idea of the impact of the risks they face.
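One common way to put numbers on a quantitative assessment is the annualized loss expectancy (ALE): the loss from a single incident (single loss expectancy, SLE) multiplied by how many times per year the incident is expected to occur (annualized rate of occurrence, ARO). The sketch below assumes that approach; the asset value, exposure factor, and rate are made-up figures.

```python
def single_loss_expectancy(asset_value: float, exposure_factor: float) -> float:
    """Expected loss from one incident: asset value times the fraction lost."""
    return asset_value * exposure_factor


def annualized_loss_expectancy(sle: float, aro: float) -> float:
    """Expected yearly loss: single loss expectancy times incidents per year."""
    return sle * aro


# Hypothetical figures: a $200,000 server, 25% of its value lost per
# incident, with one incident expected every two years (ARO = 0.5).
sle = single_loss_expectancy(asset_value=200_000, exposure_factor=0.25)
ale = annualized_loss_expectancy(sle, aro=0.5)
print(f"SLE = ${sle:,.0f}, ALE = ${ale:,.0f} per year")
```

Comparing the ALE against the yearly cost of a safeguard is one way to judge whether that safeguard is worth buying.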

Risk assessments are also usually supplemented with a “Business Impact Analysis (BIA),” which identifies and prioritizes important business assets, processes, and activities according to their security objective (e.g., confidentiality, integrity, or availability) and potential impact (e.g., low, medium, or high).

The National Institute of Standards and Technology provides Special Publication 800-37, a “Guide for Applying the Risk Management Framework to Federal Information Systems.” Organizations that do not own or manage federal information systems may still follow the guidelines established in SP 800-37. This publication provides a life cycle approach and guidelines for applying an organization-wide Risk Management Framework (RMF).

Asset Inventory

A critical aspect of managing vulnerabilities is knowing what you must protect, so it makes sense to identify all assets on the network. Since this list could be very long, it’s better to draw a distinct line between critical and non-critical assets. Critical assets, which are essential to the mission, naturally carry a higher priority than non-critical assets.

Some examples of assets to include are routers, switches, workstations, applications, DNS servers, Web servers, directory servers, E-mail servers, databases, storage devices, critical data, and WAPs. Keep in mind that critical assets can even include people and third parties crucial to the mission of the organization. Thus, the proper security controls must be implemented for each asset.
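As a rough illustration, an inventory can start out as a simple list of records with a criticality flag. The asset names below are invented, and a real inventory would track far more detail (owner, location, applied controls).

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    kind: str
    critical: bool


# Hypothetical inventory entries.
inventory = [
    Asset("edge-router-1", "router", critical=True),
    Asset("lobby-kiosk", "workstation", critical=False),
    Asset("dns-1", "DNS server", critical=True),
]


def prioritize(assets):
    """Return critical assets first, then non-critical ones."""
    # sorted() is stable, so assets keep their original order within each group.
    return sorted(assets, key=lambda asset: not asset.critical)


for asset in prioritize(inventory):
    label = "critical" if asset.critical else "non-critical"
    print(f"{asset.name} ({asset.kind}): {label}")
```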

Reviews

Periodic control reviews go a long way toward ensuring that previously installed controls continue to secure the network. For example, organizations can execute “technical control reviews,” which re-examine technical controls months after they’ve been implemented. A firewall policy, for instance, can be reviewed to make sure it’s still correctly filtering traffic and nothing has changed.

Likewise, an “operational control review” re-examines security policies, procedures, risk assessments, and current training. Reviews such as these will verify whether or not the operational controls are effective, being followed, and consistent with regulations and corporate policies. This sort of continuous monitoring assures an organization that the previously selected safeguards are still working as intended.

Identify Threats

Threat modeling will help identify current threats and vulnerabilities and define any countermeasures. Common threats facing modern networks tend to be malware, phishing, spam, session hijacking, XSS attacks, SQL injections, power failures, and natural disasters. If phishing e-mails are a recurring threat to an organization, for example, an appropriate solution would be user-awareness training whereby employees are educated on social engineering tactics. Training of this kind won’t stop phishing e-mails from arriving, but it should make employees less likely to fall for them.

Security Policies

Policies and procedures – every company, organization, agency, enterprise, or small business has to have these. Depending on the size and reach of the organization, there may be just a few important policies or hundreds of different policies, all relating to different aspects of security. Procedures are usually included in the policy. For example, an organization can have a Safety and Emergency policy and inside are the proper procedures for safe evacuation.

The most noteworthy policies tend to be the Acceptable Use Policy (AUP), the Network Access Policies (NAP), Password policies, and security awareness policies. A few honorable mentions would be the Business Continuity Planning (BCP) policies, Disaster Recovery Policies, Backup policy, Data Retention policy, Change Management Procedure policy, Remote Access policy, and the BYOD policy.

Incident Response

The Incident Response (IR) process was something I covered recently when summarizing some of the key elements of NIST SP 800-61 (revision 2). In a nutshell, the IR process assists organizations in responding efficiently and effectively to incidents both big and small. Every organization is going to experience an incident at some point, so being able to analyze incident-related data and determine an appropriate response is crucial at a time when Incident Response has become an important aspect of Information Technology.

SP 800-61 provides organizations with a way to develop incident handling policies, plans, procedures, teams, and recommendations. It also prepares organizations for the detection and analysis of cyber attacks as well as the containment, eradication, and recovery from cyber incidents.

Secure Network Architecture

There are many ways to design a network, but regardless of what it looks like, a network must be well defined; that is, the entry and exit points must be identified. These points are the common gateways for cyber-criminals; therefore, this layer should be properly secured. That means that the perimeter router should be protected by an external firewall. Equally important is that the router’s ACL should properly reflect the correct restrictions on network traffic.

In addition, public-facing assets, which are more likely to be compromised because they connect directly to the Internet, should be placed in a “Demilitarized Zone (DMZ).” The DMZ is typically placed between two firewalls, but there are other design implementations. When a server in the DMZ is compromised, the damage is ideally contained to the DMZ rather than spreading to the internal network. Subnetting and VLANs can also serve this same security purpose.

IDS/IPS and Firewalls

An “Intrusion Detection System (IDS)” is a hardware appliance (sensors and management servers) or software that detects signs of a threat using its database of rule sets and then alerts security administrators, typically via e-mail. An extension of intrusion detection is intrusion prevention. Here, an “Intrusion Prevention System (IPS)” not only detects these threats, but also blocks them, usually by modifying ACLs. There are many different types of IDS/IPS, and their detection methodologies vary.

For instance, there are active and passive IDSs, network-based IDS/IPS, and host-based IDS/IPS. These systems may use signature-based, anomaly-based, or heuristics-based detection technology. If you want to read more about IDS/IPS, I cover them here.
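The difference between signature-based and anomaly-based detection can be sketched in a few lines of Python. This is a toy illustration under my own assumptions: the two signatures are invented byte patterns, and the anomaly check is a simple "more than three standard deviations from the historical mean" rule, which is far cruder than what real products do.

```python
import statistics
from typing import List

# Hypothetical signatures: byte patterns that indicate a known attack.
SIGNATURES = {
    "sql-injection": b"' OR 1=1",
    "path-traversal": b"../../",
}


def signature_matches(payload: bytes) -> List[str]:
    """Signature-based: report every known pattern found in the payload."""
    return [name for name, pattern in SIGNATURES.items() if pattern in payload]


def is_anomalous(history: List[float], observed: float, threshold: float = 3.0) -> bool:
    """Anomaly-based: flag values far from what has been seen before."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        # With no variation in the history, any different value is unusual.
        return observed != mean
    return abs(observed - mean) / stdev > threshold


print(signature_matches(b"GET /?id=' OR 1=1 --"))
# Requests per minute seen in the past, then a sudden spike.
print(is_anomalous([100, 110, 90, 105, 95], observed=500))
```

The signature check can only catch what is already in its database, while the anomaly check can flag something new at the cost of more false positives.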

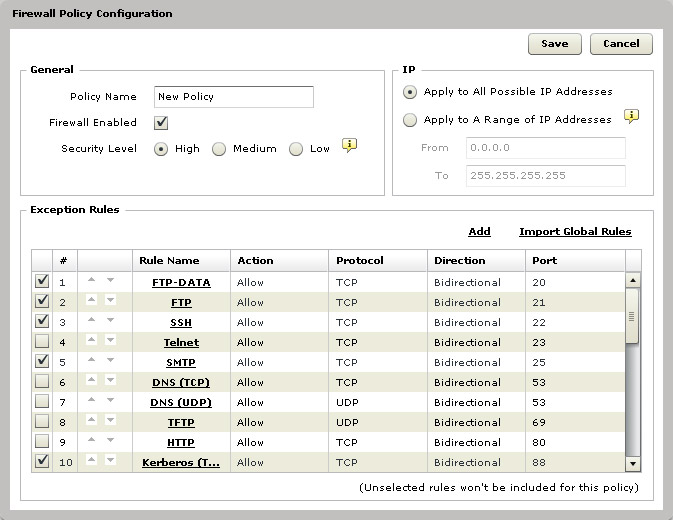

Firewalls are also either dedicated hardware appliances or software. Firewalls enforce a “firewall policy,” which is essentially the same thing as an ACL or firewall ruleset. They’re commonly placed on the perimeter, and they have evolved over the years along with the rest of the technology around them. Firewalls used to be simple, stateless packet filters working at layers 3 and 4 (Network and Transport); modern firewalls, however, come with newer features such as stateful analysis and deep packet inspection, and are aware of traffic all the way up to layer 7 (Application).

Firewall Policy in IBM Endpoint Manager. Reprinted from “Firewall Policy Configuration,” by IBM,

Once their rules and settings are configured, firewalls will block unwanted traffic, filter inbound traffic and direct it to the correct internal destinations, hide vulnerable nodes, and log traffic. Some well-known next-generation firewall vendors are Check Point, Cisco, and Palo Alto Networks. Firewalls are a lengthy topic in their own right, so I’ll leave it there.
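Here is a minimal sketch of how a stateless ruleset might be evaluated, assuming the common "first matching rule wins, otherwise deny" convention. The rules and addresses are invented for illustration, and real firewalls match on many more fields (protocol, destination address, connection state).

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rule:
    action: str               # "allow" or "deny"
    source: str               # source network in CIDR notation
    dest_port: Optional[int]  # None matches any destination port


# Hypothetical ruleset, evaluated from top to bottom.
RULES = [
    Rule("deny", "203.0.113.0/24", None),  # block a known-bad network outright
    Rule("allow", "0.0.0.0/0", 443),       # anyone may reach HTTPS
    Rule("allow", "10.0.0.0/8", 22),       # SSH from internal addresses only
]


def evaluate(src_ip: str, dest_port: int, rules=RULES) -> str:
    """Return the action of the first rule that matches the packet."""
    address = ipaddress.ip_address(src_ip)
    for rule in rules:
        in_network = address in ipaddress.ip_network(rule.source)
        port_matches = rule.dest_port is None or rule.dest_port == dest_port
        if in_network and port_matches:
            return rule.action
    return "deny"  # assumed implicit deny when nothing matches


print(evaluate("198.51.100.4", 443))
print(evaluate("198.51.100.4", 22))
```

Rule order matters in this model: moving the deny rule below the HTTPS rule would let the blocked network reach port 443.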

NAT

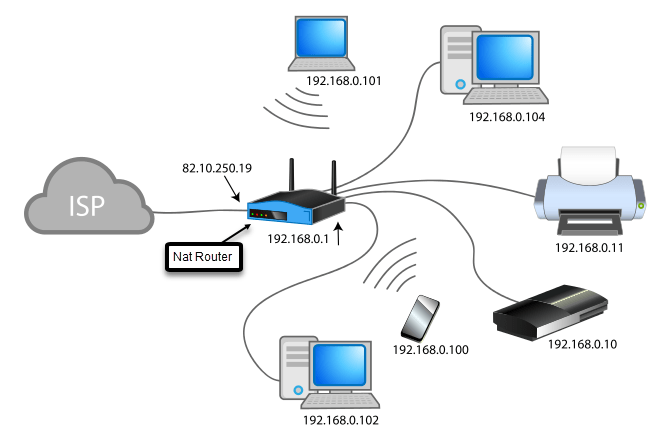

“Network Address Translation (NAT)” was intended to extend the life of IPv4 addresses, but it also came with an unplanned security benefit. Routers that run NAT effectively hide the IP addresses of internal nodes. A gateway router, for instance, might have a publicly routable IP address on its WAN connection, but a block of private IP addresses on its LAN connection. These private IP addresses are not directly reachable from the Internet. When a computer on the LAN wants to connect to a server out on the Internet, however, the router translates the computer’s private source IP address into the router’s WAN interface address.

NAT. Reprinted from “What is Network Address Translation,” by the Geek Police, 2018

There are many different types of NAT, which I can go over here:

- Base NAT: This is what people are usually referring to when they discuss NAT. With Base NAT, the router translates an internal node’s private IP address into the router’s WAN public IP address. No system out on the Internet can connect to that internal node unless port forwarding is enabled on the router.

- PAT: This stands for Port Address Translation. PAT also goes by “Source NAT” or “Dynamic NAT Overloading.” PAT does the same thing as base NAT; however, the router also changes the internal node’s source port, a process called port translation. The port can be changed to any unique port number (except the well-known ones). PAT only works for outbound communication.

- Destination NAT: Destination NAT is also called “Static NAT” or “Port Forwarding.” As you might have already guessed, Destination NAT works for inbound communication, which is good if you’re running an internal service that needs to be accessible to Internet users. For example, you could be running a Web server on port 8080 on your home network. Destination NAT converts your public IP address to the internal, private IP address of your Web server, allowing inbound communication to reach inside your network. This would never happen unless you configured your router to do so.

- Dynamic NAT: This is also called “Pooled NAT,” and you’ll see why in a moment. Organizations can buy a pool of global IP addresses, which can be shared amongst the nodes on the internal network. If you have 5 internal computers with private IP addresses and 5 global IP addresses, then each computer can get its own global IP address when it reaches out to the Internet. If you have a 6th computer, it will have to wait its turn. Dynamic NAT does not perform port translation, but PAT does. To include port translation, you have to add PAT, which then becomes Dynamic NAT Overloading.
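The port translation that PAT performs can be sketched as a pair of lookup tables. This is a simplified model under my own assumptions: the `PatRouter` class is hypothetical, public ports are handed out sequentially starting at 49152, and there is no handling of timeouts or port exhaustion.

```python
import itertools


class PatRouter:
    """Toy model of a router doing Port Address Translation."""

    def __init__(self, public_ip: str, first_port: int = 49152):
        self.public_ip = public_ip
        self._next_port = itertools.count(first_port)
        self._outbound = {}  # (private_ip, private_port) -> public_port
        self._inbound = {}   # public_port -> (private_ip, private_port)

    def translate_outbound(self, private_ip: str, private_port: int):
        """Rewrite a private source address/port to the shared public one."""
        key = (private_ip, private_port)
        if key not in self._outbound:
            public_port = next(self._next_port)
            self._outbound[key] = public_port
            self._inbound[public_port] = key
        return self.public_ip, self._outbound[key]

    def translate_inbound(self, public_port: int):
        """Map a reply back to the internal node, or None if unsolicited."""
        return self._inbound.get(public_port)


router = PatRouter("198.51.100.1")
print(router.translate_outbound("192.168.1.10", 51000))
print(router.translate_outbound("192.168.1.11", 51000))
print(router.translate_inbound(40000))
```

The last call shows the security side effect described above: an inbound packet with no matching table entry has nowhere to go unless a forwarding rule has been configured.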

Proxy Servers

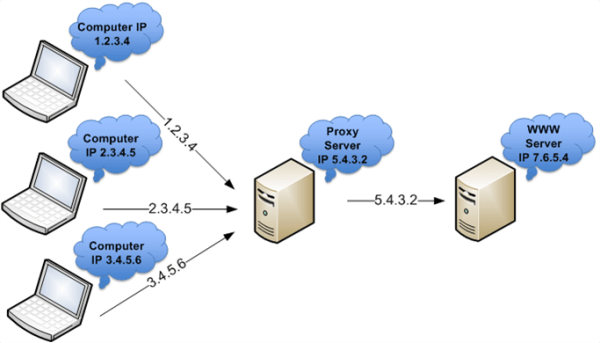

You can install an intermediary device between a source and destination, called a proxy server. The proxy server sits in the middle of the communication between an internal user and a server. When a user makes a request out to a Web server, the proxy server terminates the connection and makes the connection to the Web server on the user’s behalf. The purpose of this is access control. Proxy servers can control what users have access to by blacklisting known-bad or malicious web sites. They are also capable of content filtering, such as URL and e-mail filtering. Furthermore, because proxy servers are also capable of NAT, the Web server only sees the proxy server connecting to it, not the internal user.

Basic Forward Proxy. Reprinted from “Few Features of Proxy Server,” by Host Dime Blog, 2015.

If an organization is using a forward proxy on their network, then all internal user traffic will have to flow through the proxy. This may slow down network traffic, but if the proxy is capable of caching, then it definitely helps ease the load.
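Blacklisting and caching together can be sketched like this. The `ForwardProxy` class, the blocked host names, and the injected `fetch` function are all hypothetical; a real proxy would also honor cache expiry headers and would not return a bare status string.

```python
from urllib.parse import urlsplit

# Hypothetical blacklist of known-bad hosts.
BLOCKED_HOSTS = {"malware.example", "phishing.example"}


class ForwardProxy:
    """Toy forward proxy that filters by host and caches responses."""

    def __init__(self, fetch):
        self._fetch = fetch  # callable that retrieves a URL from the origin
        self._cache = {}

    def request(self, url: str) -> str:
        host = urlsplit(url).hostname
        # Access control: refuse blacklisted hosts before contacting them.
        if host in BLOCKED_HOSTS:
            return "403 Forbidden"
        # Caching: only go to the origin server on the first request.
        if url not in self._cache:
            self._cache[url] = self._fetch(url)
        return self._cache[url]


def fake_fetch(url: str) -> str:
    """Stand-in for a real HTTP request so the example runs offline."""
    return f"contents of {url}"


proxy = ForwardProxy(fake_fetch)
print(proxy.request("http://malware.example/payload"))
print(proxy.request("http://example.com/"))
```

A second request for the same URL is answered from the cache, which is where the load reduction comes from.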

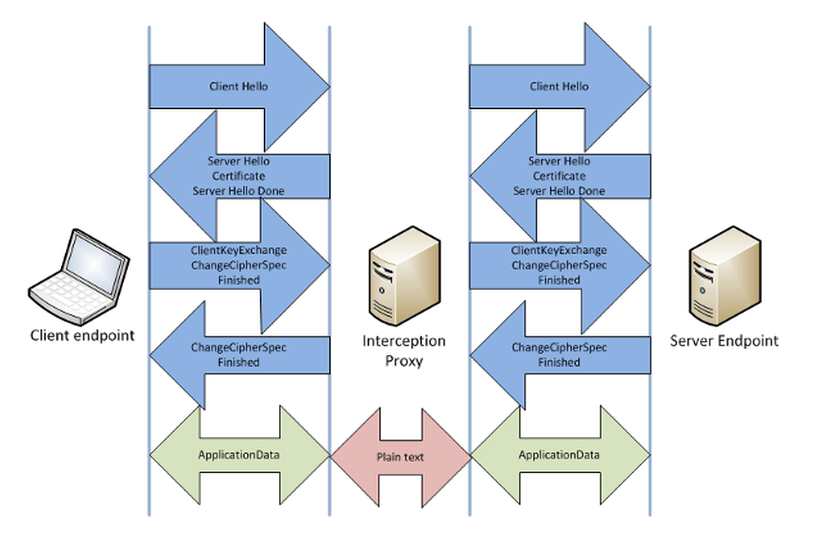

Some proxies act purely for interception so that analysts can gain insight into user behavior. SSL proxies can “decrypt” HTTPS traffic too. This is a bit tricky because it requires a lot of configuration. Essentially, the proxy will have its own certificate. When the user requests access to a Web server via SSL/TLS, the proxy intercepts the communication and presents the user with its own certificate to secure the first half of the communication. The proxy then uses the Web server’s certificate to secure the other half of the communication. The problem is that the proxy’s certificate isn’t signed by an authority the browser already trusts, which will generate a warning that the certificate is untrusted. This can be avoided if all internal web browsers are configured to trust the proxy’s certificate.

SSL Proxy Configuration. Reprinted from “Interception SSL via Proxy Server,” by What is an SSL Certificate, 2016.

Hardening

This section pretty much speaks for itself. Hardening is the process of making a device more secure than its default configuration. Servers, for example, might come with default authentication credentials and numerous ports/services open and accessible. There are countless services available for a Web server, for example, but at a minimum, only TCP ports 80/443 are required to be open (exceptions obviously apply). Leaving unnecessary ports open on the Web server increases the attack surface, giving hackers more places to search for known vulnerabilities and exploit them.

Hardened. Reprinted from “System Hardening Services,” by Secure-Bytes, 2016.

Creating baselines also goes a long way because when suspicious events do occur on a network (and they will), you’ll have a baseline to compare them to. Imagine all the applications running on a single workstation. With a baseline of what normally runs there, it is much easier to identify the questionable application.
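A baseline comparison boils down to a set difference. In this sketch the process names are invented, and collecting the list of what is actually running is left out.

```python
# Hypothetical baseline of processes expected on a workstation.
BASELINE = {"sshd", "cron", "nginx"}


def compare_to_baseline(running, baseline=BASELINE):
    """Report what is running but not expected, and what is expected but absent."""
    running = set(running)
    return {
        "unexpected": sorted(running - baseline),
        "missing": sorted(baseline - running),
    }


print(compare_to_baseline(["sshd", "nginx", "xmrig"]))
```

Anything in the "unexpected" list is a candidate for a closer look; a "missing" entry can matter too, for example when a security agent has been stopped.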

Configuration baselines are also a must. During an incident response, it’s common to eradicate an incident by sanitizing a host and then reconstructing it to its pristine state. Without a configuration baseline, you’ll have to spend a significant amount of time manually rebuilding the host.

Monitoring Key Files

When detecting system intrusions, it’s beneficial to monitor key system files that would be of interest to an intruder. Therefore, some popular system files would be:

- /etc/passwd: stores user information in modern Linux environments

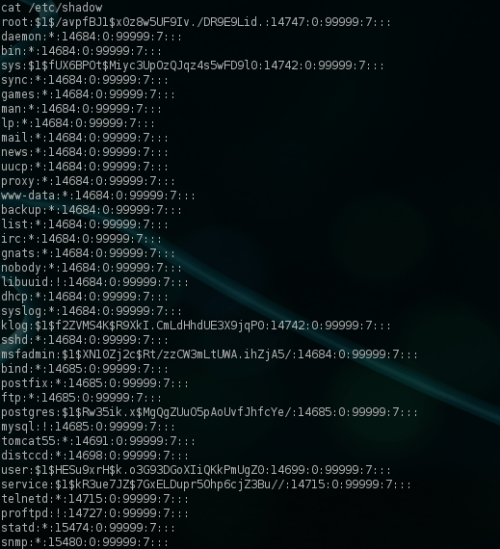

- /etc/shadow: stores the hash of the password in modern Linux environments

- Security Account Manager (SAM) file: the database of user passwords in Windows environments

- Windows Registry: A datastore where Windows systems store system-wide settings

- Dynamic Link Libraries (DLLs): Though the shared DLLs are also stored in the Windows Registry, they’re something of interest malware authors.

etc/shadow reveals hashed passwords. Reprinted from “Dumping And Cracking Unix Password Hashes,” by netbiosX, 2012.

In Windows systems, organizations can use “Object Access Auditing,” which is commonly configured through Group Policy. With this feature, modifying an audited file generates an alert detailing the user responsible and a timestamp of when the change occurred. Another way to monitor key files is through “File Integrity Checking.” This is performed by taking a hash of the file, storing it in a secure location, and then re-hashing the file over time to see if anything has changed. Any modification to the file will generate a completely different hash. It’s best not to use the conventional MD5 and SHA-1 hashes anymore as they are vulnerable to collision attacks.
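File integrity checking is easy to sketch with Python’s standard `hashlib` module. This example uses SHA-256 as one alternative to MD5 and SHA-1; the function names are my own, and where the recorded hashes are stored is left to the reader (it needs to be somewhere an intruder can’t rewrite them).

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=65536):
    """Hash a file in chunks so large files don't need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def changed_files(known_hashes):
    """Return paths whose current hash differs from the recorded one.

    known_hashes maps a file path to the hex digest recorded earlier.
    A file that has since been deleted also counts as changed.
    """
    changed = []
    for path, expected in known_hashes.items():
        if not Path(path).is_file() or sha256_of(path) != expected:
            changed.append(path)
    return changed
```

In practice you would record `sha256_of("/etc/passwd")` and the other key files once, then run `changed_files` on a schedule and alert on any non-empty result.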

Continuous Monitoring and Analysis

Other than monitoring key system files, organizations should also analyze other security controls. This means examining and analyzing key sources of data, such as firewall logs, IDS/IPS alerts and logs, network device logs, NetFlow information, system resources, packet captures, and system logs. The volume of logs to analyze can be overwhelming, but log aggregation solutions, such as Security Information and Event Management (SIEM) systems, can assist administrators.

Many systems are capable of reporting their logs in a standardized syslog format to a syslog server over UDP/TCP port 514. Each message carries a severity code, and the syslog server can use it to alert administrators to suspicious log activity, which is critical to good network security. Some good SIEMs are AlienVault’s USM and OSSIM, HP Enterprise’s ArcSight, IBM’s QRadar, and Splunk’s Security Intelligence Platform.
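In the syslog protocol, each message begins with a priority value in angle brackets that packs the facility and severity into one number (priority = facility × 8 + severity). A small sketch of decoding it follows; the function name and the sample message are mine.

```python
import re

# Severity names in order of their numeric codes, 0 through 7.
SEVERITIES = [
    "emergency", "alert", "critical", "error",
    "warning", "notice", "informational", "debug",
]


def parse_priority(message: str):
    """Split the leading <PRI> field into (facility number, severity name)."""
    match = re.match(r"<(\d{1,3})>", message)
    if match is None:
        raise ValueError("message does not start with a <PRI> field")
    facility, severity = divmod(int(match.group(1)), 8)
    return facility, SEVERITIES[severity]


print(parse_priority("<34>Oct 11 22:14:15 mymachine su: 'su root' failed"))
```

Here the priority 34 decodes to facility 4 with severity 2 (critical), which is the kind of message an alerting rule would want to surface first.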

Conclusion

Again, this isn’t intended to be an all-inclusive list of network security controls, but it’s a good start for newcomers who are just stepping through the door of cybersecurity.
