Did you know click-bait can install malware onto your device? Access your webcam? Make you pay for services you aren’t aware of? If you want to learn how to better protect yourself, skip to the last section.

“Click-jacking” is a common, sneaky, and constantly evolving Web-based security threat. It’s also referred to as “click-bait.” Victims of click-jacking are tricked into clicking a button or another GUI element on a Web page, which leads to an arbitrary and undesirable outcome. There are two preconditions attackers must meet to set up a click-jacking attack. First, the attacker embeds a malicious object inside a Web page and hides it under another legitimate element of that Web page. Many attackers, for example, prefer to hide a malicious object underneath the Facebook “like” button. Second, the attacker must entice a victim into clicking on the malicious element. Attackers typically do this with an alluring message.

Facebook Click-Bait:

These two figures portray a great example of a Facebook click-jacking attack.

Victims are lured into clicking the Facebook “like” button. The message and the video of the female in this scenario target mainly a male audience.

By prompting an age verification box, victims are further enticed into clicking the bait. Note: a Google search reveals that jaa means “yes” and peruuta means “cancel” in Finnish, suggesting that the attacker is from a Finnish-speaking country.

The two images above meet all the prerequisites for a click-jacking attack. As you can see, users are tempted with an alluring message and a potentially erotic video, which “baits” the victim into clicking the like button. More likely than not, clicking the like button will redirect the user to the attacker’s Web site, where a number of things may happen. One possible outcome, and the most common one on Facebook, is that the victim will be led to a survey page requesting personal information. Then again, nothing may happen at all, and the attacker simply benefits from the traffic generated to his/her Web site. Worse, many victims may be led to a malicious Web site that automatically downloads malware onto their computer. These are called Drive-By Downloads (DBDs).

However, we don’t normally see these types of click-bait anymore. Or, at least I don’t. The ones I do see look more like the one below. I might see a Facebook friend who was tricked into clicking click-bait, like this one:

Many times, when you click on the click-bait, you will be directed to a security CAPTCHA, like the one below:

By clicking “confirm,” you are actually sharing the click-bait onto your profile, which is what allows the click-bait to spread.

Sometimes, click-baits are hard to notice. For instance, take a look at the example shown below. It looks pretty trustworthy, mainly because it comes from an authoritative Web site, like YouTube.

But it is very possible for attackers to spoof the URL in the HTML meta tags. Unfortunately, Facebook doesn’t check whether the URL in the meta tag is really the same as the Web page’s URL.
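To make that missing check concrete, here is a minimal Python sketch of what such a comparison could look like. It assumes the URL tag in question is the Open Graph `og:url` meta tag, and the names `OgUrlParser` and `declared_host_matches` are hypothetical names of mine. This is not how Facebook processes links; it only illustrates the idea of comparing the declared URL against the address the page was really fetched from.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class OgUrlParser(HTMLParser):
    """Collects the content of the first <meta property="og:url"> tag."""

    def __init__(self):
        super().__init__()
        self.og_url = None

    def handle_starttag(self, tag, attrs):
        # Only the first og:url declaration is kept.
        if tag != "meta" or self.og_url is not None:
            return
        attr_map = dict(attrs)
        if attr_map.get("property") == "og:url":
            self.og_url = attr_map.get("content")


def declared_host_matches(page_url, html):
    """Return True only if the og:url host equals the host the page came from."""
    parser = OgUrlParser()
    parser.feed(html)
    if parser.og_url is None:
        return True  # nothing declared, so nothing contradicts the real URL
    declared = urlparse(parser.og_url).hostname
    actual = urlparse(page_url).hostname
    return declared is not None and declared == actual
```

A page served from an attacker's domain that declares a YouTube address in its meta tag would fail this check, while a genuine YouTube page would pass it.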

Facebook uses “Link Shim” to protect its users from malicious links, and it does so by blacklisting known malicious links. So, when you click on a link, Link Shim checks to see if that link is on its blacklist. The cool thing about Link Shim is that it uses machine learning to identify malicious links that it has never even encountered before. But security researcher Barak Tawily found a way to bypass Link Shim.
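The blacklist part of that idea is simple enough to sketch in a few lines of Python. To be clear, this is not Link Shim's actual code, which I have not seen. The blacklist entries and the function name `is_blacklisted` are made up for illustration, and the machine-learning part is left out entirely.

```python
from urllib.parse import urlparse

# Hypothetical blacklist entries, not real Link Shim data.
BLACKLISTED_HOSTS = {"malware.example", "phish.example"}


def is_blacklisted(url, blacklist=BLACKLISTED_HOSTS):
    """Return True if the URL's host, or any parent domain of it, is blacklisted."""
    host = urlparse(url).hostname or ""
    labels = host.split(".")
    # Check "a.b.c", then "b.c", then "c", so subdomains of a bad host are caught.
    return any(".".join(labels[i:]) in blacklist for i in range(len(labels)))
```

Matching on whole domain labels, rather than on substrings, keeps a harmless host such as `notmalware.example` from being flagged just because its name contains a blacklisted one.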

Other Click-Bait:

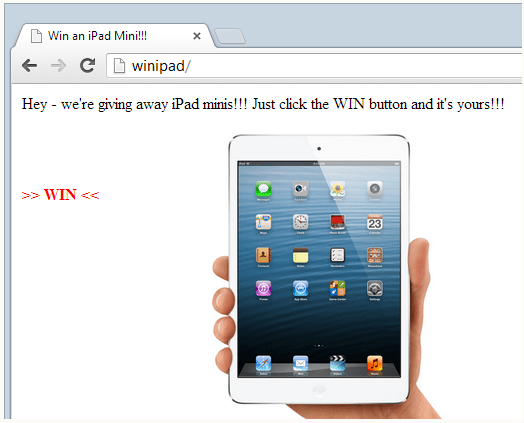

Click-jacking is not limited to Facebook users. Many times, click-jacking advertisements will pop up in a separate window and ask a victim to claim a free prize. Click-jacking can be implemented on almost any Web page, and most attacks are not as obvious as the examples above. Even computer-literate users with experience in security can fall for click-jacking attacks. The example below depicts a click-jack pop-up that should be familiar to most Web users.

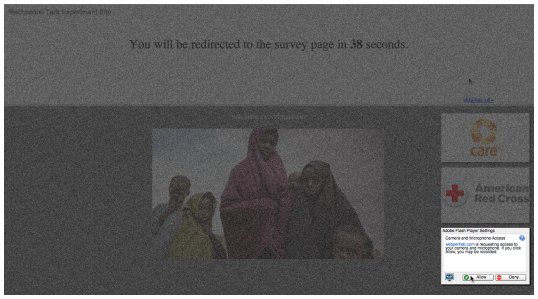

Victims will click on the “WIN” button thinking they have won an iPad mini, but what they actually clicked on is shown below. Recall that attackers will hide malicious objects underneath legitimate objects.

If this is raising any questions, then let me clarify any confusion as to what the attacker has cleverly accomplished. The attacker has actually embedded a link to the victim’s bank underneath the “WIN” button. For simplicity, assume that clicking the link will direct the victim to his/her banking Website, prompting the victim to sign in, and with one click, the victim’s bank transfers all his/her money to the attacker.

This image shows the invisible content of a click-jacking attack hidden underneath a user element.

Cursor Spoofing Attacks:

There are many other categories of click-jacking attacks as well. A “cursor-spoofing attack,” for example, shows the user a fake cursor in a different location from the actual pointer. In a typical cursor-spoofing attack, a click-jacking pop-up appears on the user’s screen and a fake cursor rolls over an embedded element, such as a “skip this ad” button. An example of a cursor-spoofing attack is depicted here:

In this cursor-spoofing scenario, a fake cursor pops up and lures the victim into clicking the “skip this ad” button. But if the user clicks the “skip this ad” button, the click actually lands on the “allow” button for the victim’s Adobe Flash Player settings, which grants the Web site permission to access the user’s Webcam. The best way to defend oneself against this attack is to disallow cursor customization in the Web browser.
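Cursor customization on a Web page is done with the CSS `cursor` property, which can hide the real pointer (`cursor: none`) or replace it with an image (`cursor: url(...)`). As a rough illustration of what “disallowing” it could involve, the Python sketch below scans a stylesheet for those two patterns. The function name is hypothetical, and a real browser would enforce this inside its rendering engine rather than with a regular expression.

```python
import re

# Matches CSS declarations such as "cursor: none" or "cursor: url(fake.png), auto".
CUSTOM_CURSOR = re.compile(r"cursor\s*:\s*(none|url\()", re.IGNORECASE)


def hides_or_replaces_cursor(css):
    """Return True if the stylesheet hides the pointer or swaps in a custom image."""
    return CUSTOM_CURSOR.search(css) is not None
```

Ordinary declarations such as `cursor: pointer` are left alone, since they only switch between the browser's built-in cursors.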

Protection From Click-Bait:

Computer-illiterate users may skip to the next section.

To prevent Web browsers from popping up click-jack windows, some Web browsers can ask for confirmation whenever a user is redirected to a domain that is either in a whitelist or blacklist. This will give users the chance to deny click-jacking pop-ups. Typically, the Web browser will prompt a message saying something like, “the Web site you are trying to access is attempting to open up another window.” Users are then given the option to allow or deny.

Another preventative measure involves randomizing the graphical user interface elements or objects on Web pages. For example, Amazon.com can randomize where the “pay” button appears on its checkout Web page, therefore making it difficult for attackers to embed and hide malicious objects underneath the pay button.
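Here is a small Python sketch of how a site might pick a fresh position for a sensitive button on every page load. The function name and the pixel dimensions in the usage note are assumptions of mine for illustration, not anything Amazon is known to use.

```python
import secrets


def random_button_position(page_width, page_height, button_width, button_height):
    """Pick a top-left (x, y) pixel position that keeps the button fully on the page."""
    max_x = page_width - button_width
    max_y = page_height - button_height
    if max_x < 0 or max_y < 0:
        raise ValueError("button does not fit on the page")
    # secrets draws from the operating system's random source, which makes
    # the chosen position hard for an attacker to predict.
    return secrets.randbelow(max_x + 1), secrets.randbelow(max_y + 1)
```

Calling `random_button_position(800, 600, 120, 40)` returns a different `(x, y)` pair on most calls, so an overlay prepared in advance for one fixed spot would usually miss the button.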

An additional option would require Web browsers to enforce context integrity since victims are often misled to act out-of-context. Ensuring context integrity means a Web user is presented with everything he/she is supposed to see when clicking on a user interface element on a Web page. Web applications know which elements, such as PayPal’s “pay” button, are highly sensitive objects. Therefore, it should be the job of the Web site to determine which user interface elements are sensitive.

The subsequent job of the Web browser is then to enforce this context integrity. This can be accomplished in one of two ways. First, the Web browser can inspect the CSS styles of graphical user interface elements. This means that Web browsers would check the size, positioning, and stack order of an element. The second approach is to have the Web site create a reference of the graphical user interface elements on its Web pages, or a static bitmap reference, and then have the Web browser enforce context integrity by making sure the rendered elements match this reference. If the Web browser’s bitmap does not match the Web site’s bitmap, then the user will not be able to click on any sensitive elements.
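The bitmap approach can be sketched roughly as follows in Python. This is a simplified illustration under my own assumptions: bitmaps are plain lists of pixel rows, each pixel is a single integer, and the helper names `crop` and `click_allowed` are hypothetical. A real browser would compare what was actually rendered on screen.

```python
def crop(bitmap, left, top, width, height):
    """Return the width x height region of bitmap whose top-left corner is (left, top)."""
    return [row[left:left + width] for row in bitmap[top:top + height]]


def click_allowed(reference, screen, left, top):
    """Allow the click only if the screen shows exactly the reference bitmap at (left, top)."""
    height = len(reference)
    width = len(reference[0]) if reference else 0
    return crop(screen, left, top, width, height) == reference
```

If an attacker covers even one pixel of the sensitive element with something else, the cropped region no longer equals the reference and the click is refused.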

This design is referred to as “InContext Defense.” InContext Defense is complemented by screen-freezing and Web page muting. Since attackers like to include distracting animations and voice instructions to coerce victims into clicking a baited element, animations near a sensitive element and sounds/voices that start when a user interacts with a sensitive element are disabled. To further supplement InContext Defense, a “lightbox,” or an overlaying grey mask, lights up around any sensitive element whenever the user’s cursor rolls over it. An example of a lightbox defense mechanism is shown here:

A lightbox defense mechanism surrounds a sensitive element. This helps the user understand that this is a cursor-spoofing attack. The fake cursor is hovering over the “skip this ad” button at the top-right of the screen. When the user rolls over this malicious element, the actual element lights up in the bottom-right, which helps the user understand that if he/she clicks the “skip this ad” button, he/she will give the Web site permission to access the user’s Webcam.

Protecting Yourself from Click-Bait:

- Be vigilant about thumbnails and descriptions. They are usually made to entice you. They are usually provocative.

- If the link is available, look at the web address to see where it is directing you. If it seems suspicious, do NOT click it.

- Look at who is messaging you or sharing the click-bait with you. If it is not someone that you normally interact with, do NOT trust the link.

- Check to see if your Web browser offers any protection from click-bait.

- Some antivirus software has safe-surfing features. For example, Norton Security Suite for PC is a good antivirus program that is free for anyone with an Xfinity account.

