No, I’m not referring to the Central Intelligence Agency. I’m referring to the three security principles: Confidentiality, Integrity, and Availability. In the cybersecurity world, these three principles are our core security goals.

Confidentiality

We keep sensitive and personal information “confidential.” That is, the confidentiality principle is about preventing the unauthorized disclosure of data. We can protect data confidentiality in several ways.

For one, we can use encryption to scramble sensitive data, making it unreadable to anyone who accesses it without the key. Only those with the decryption key can read the contents. We use encryption in a number of areas, including our Web traffic, e-mail, storage, and any communication over the network that we deem too sensitive for others to see.
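To make the "scramble with a key" idea concrete, here is a toy sketch using a one-time pad (XOR with a random key as long as the message). This is an illustration I am adding, not something from the study guide, and the names `xor_bytes`, `message`, and `key` are my own. Real systems should use a vetted algorithm such as AES through a maintained library rather than anything hand-rolled.

```python
import secrets

def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR each data byte with the matching key byte."""
    if len(key) != len(data):
        raise ValueError("a one-time pad key must be exactly as long as the data")
    return bytes(d ^ k for d, k in zip(data, key))

# Hypothetical sensitive message, for illustration only.
message = "Payroll run is scheduled for Friday".encode("utf-8")
key = secrets.token_bytes(len(message))   # random key, used once, kept secret

ciphertext = xor_bytes(message, key)      # unreadable without the key
recovered = xor_bytes(ciphertext, key)    # the same operation decrypts

print(ciphertext.hex())
print(recovered.decode("utf-8"))
```

Anyone who intercepts `ciphertext` without `key` sees only random-looking bytes, while the key holder gets the original message back.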

We can also use access control to authorize only certain people to view data. A related principle in cybersecurity is the Principle of Least Privilege, meaning we grant users only the rights and permissions needed to perform their job, and nothing more. For example, we would not grant a marketing employee access to the financial server unless that employee needs it for their job. Otherwise, the server should remain accessible only to the finance employees and any other employees who need it to do their work. Several access control models implement this principle, such as Role-Based Access Control, Rule-Based Access Control, Discretionary Access Control, and Mandatory Access Control.
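The marketing and finance example can be sketched as a tiny role-based check. This is a minimal sketch, not a real access control system; the users, roles, and resource names below are hypothetical.

```python
# Hypothetical roles and resources, for illustration only.
ROLE_PERMISSIONS = {
    "finance": {"financial_server"},
    "marketing": {"marketing_share"},
}

USER_ROLES = {
    "alice": {"finance"},
    "bob": {"marketing"},
}

def can_access(user: str, resource: str) -> bool:
    """Deny by default; allow only if one of the user's roles grants the resource."""
    roles = USER_ROLES.get(user, set())
    return any(resource in ROLE_PERMISSIONS.get(role, set()) for role in roles)

print(can_access("alice", "financial_server"))  # True
print(can_access("bob", "financial_server"))    # False
```

Denying by default is what makes this least privilege: a user gets nothing unless a role explicitly grants it.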

One final, and less common, way organizations keep their data confidential is through steganography. This is the practice of hiding data within other data, typically image files such as .jpeg files. People who use steganography usually modify bytes within an image file so they can transmit or store sensitive data in plain sight. Without steganalysis tools, it’s very difficult to determine whether an image file is holding hidden information.
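One common way to "modify the bytes" is to overwrite only the least significant bit of each byte, which changes each value by at most 1. The sketch below shows that idea on a plain byte string standing in for raw, uncompressed pixel values. It is an assumption-laden illustration with my own function names `hide` and `reveal`; lossy formats such as JPEG need different embedding techniques.

```python
def hide(cover: bytes, secret: bytes) -> bytes:
    """Write each bit of `secret` into the lowest bit of successive cover bytes."""
    bits = [(byte >> shift) & 1 for byte in secret for shift in range(7, -1, -1)]
    if len(bits) > len(cover):
        raise ValueError("cover data is too small for the secret")
    stego = bytearray(cover)
    for i, bit in enumerate(bits):
        stego[i] = (stego[i] & 0xFE) | bit   # clear the lowest bit, then set it
    return bytes(stego)

def reveal(stego: bytes, length: int) -> bytes:
    """Read `length` bytes back out of the lowest bits."""
    out = bytearray()
    for i in range(length):
        value = 0
        for byte in stego[i * 8:(i + 1) * 8]:
            value = (value << 1) | (byte & 1)
        out.append(value)
    return bytes(out)

cover = bytes(range(256))            # stand-in for raw pixel values
stego = hide(cover, b"meet at 9")
print(reveal(stego, 9))              # b'meet at 9'
```

Because no byte moves by more than one step, the carrier looks unchanged to the eye, which is why detection usually takes statistical analysis.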

Integrity

When you receive an e-mail or load a Web page, how can you be sure that the data in front of you was not altered before it reached your computer? Integrity seeks to ensure that data has not been modified in any unauthorized way, whether in transit or in storage. When we open a file, we want to be sure that the data is the same as we left it.

Data can be changed accidentally through human error or system errors, even by authorized individuals, but it can also be changed deliberately by attackers and malware.

Hashing is one way to protect data integrity. By executing a hashing algorithm against a file, message, or password, we create what’s called a “hash.” The hash is usually displayed as a short string of hexadecimal characters (0-9 and A-F). Its length is fixed by the hashing algorithm used, so it doesn’t reveal the original size of the file, message, or password we hashed. Let’s say, for instance, you hash a file. As long as the hash doesn’t change, we can be confident that the file hasn’t been modified. Think of a hash as a fingerprint. If anyone were to modify the contents of the file, even slightly, the hash would be completely different. The most common reasons we use hashing are to verify file integrity and to store passwords in hashed form. Popular hashing algorithms include MD5 and SHA-2. HMAC-MD5 and HMAC-SHA-2 combine one of those hashes with a shared secret key, so they verify who produced the hash as well as integrity. Note that MD5 is no longer considered secure against deliberate tampering, so SHA-2 is the safer choice.

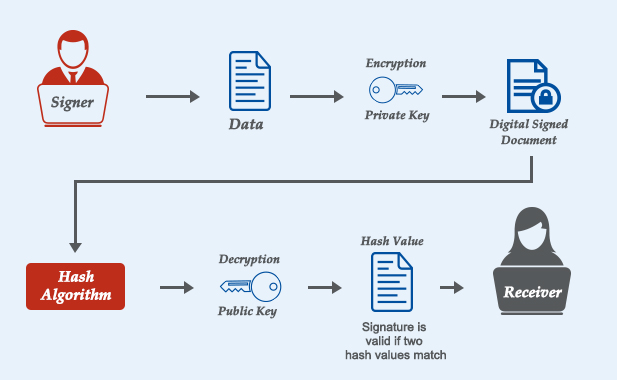
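Here is a small sketch of the fingerprint idea using SHA-256 (a member of the SHA-2 family) from Python's standard `hashlib` module. The two sample messages are made up for illustration.

```python
import hashlib

# Two hypothetical messages that differ by a single character.
original = b"Quarterly report: revenue up 4%"
tampered = b"Quarterly report: revenue up 9%"

digest_original = hashlib.sha256(original).hexdigest()
digest_tampered = hashlib.sha256(tampered).hexdigest()

print(digest_original)
print(digest_tampered)
print(len(digest_original))                  # 64 hex characters for SHA-256
print(digest_original == digest_tampered)    # False
```

The digest is always 64 hexadecimal characters regardless of input size, and changing one character produces an unrelated-looking digest.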

Digital signatures are another way we ensure data integrity. They also provide assurance that the message or electronic document we received really came from the sender, and they provide what’s called “non-repudiation,” meaning that the sender cannot later deny sending the message. We commonly use digital signatures to protect our e-mails. A digital signature is computed mathematically: the sender hashes the message and then encrypts that hash with the sender’s private key. The recipient decrypts the signature using the sender’s public key, which is distributed in a digital certificate (I’ll get into those next), to recover the sender’s hash. The recipient then hashes the received message and checks that the two hashes match. Note that the e-mail message itself is not encrypted, only the hash of the message.
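The sign-and-verify steps can be sketched with textbook RSA. This is a toy I am adding for illustration: the key is built from two small known primes, there is no padding, and the names `sign`, `verify`, and `digest_as_int` are my own. Real signatures use a vetted library, much larger keys, and a padding scheme.

```python
import hashlib

# Toy key pair built from two known Mersenne primes. Far too small for real use.
P = 2**61 - 1
Q = 2**89 - 1
N = P * Q                              # public modulus
E = 65537                              # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))      # private exponent (needs Python 3.8+)

def digest_as_int(message: bytes) -> int:
    """Hash the message and reduce it so it fits under the toy modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N

def sign(message: bytes) -> int:
    """Sender: 'encrypt' the hash with the private key."""
    return pow(digest_as_int(message), D, N)

def verify(message: bytes, signature: int) -> bool:
    """Recipient: 'decrypt' with the public key and compare against a fresh hash."""
    return pow(signature, E, N) == digest_as_int(message)

message = b"Transfer approved: invoice 1042"   # hypothetical message
signature = sign(message)
print(verify(message, signature))              # True
# A tampered message should not verify against the original signature.
print(verify(b"Transfer approved: invoice 9999", signature))
```

Note that `message` travels in the clear; only its hash is transformed with the private key, which matches the point above that the e-mail itself is not encrypted.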

Digital certificates allow us to communicate securely over the Internet using Public Key Infrastructure (PKI). In PKI, we use a private key and a public key to encrypt and decrypt information. This can be confusing, so I’ll save it for another post. But the process is ingenious. Without digital certificates, we would have no practical way of verifying digital signatures or securing HTTP traffic.

Availability

The third principle is availability, which ensures that data and services are available when needed. Generally, we like to have High Availability (HA), or high uptime, but the target depends on the organization. Some organizations, like Google, need their Web servers available 24 hours a day, 7 days a week, 365 days a year. Essentially, they aim for 99.999% uptime (“five nines”), which equates to only about 5 minutes of downtime per year!
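The five-minute figure is easy to check with a little arithmetic. The sketch below assumes a 365-day year, and the function name is my own.

```python
MINUTES_PER_YEAR = 365 * 24 * 60   # 525,600, ignoring leap years

def downtime_minutes_per_year(uptime_percent: float) -> float:
    """Minutes per year a service may be down at the given uptime percentage."""
    return MINUTES_PER_YEAR * (1 - uptime_percent / 100)

for uptime in (99.0, 99.9, 99.99, 99.999):
    minutes = downtime_minutes_per_year(uptime)
    print(f"{uptime}% uptime allows about {minutes:.1f} minutes of downtime per year")
```

At 99.999% the allowance works out to roughly 5.3 minutes per year, while 99% allows 5,256 minutes, which is more than three and a half days.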

We can keep availability high by using redundancy and fault tolerance. By redundancy, I mean using duplicate network infrastructure devices, such as switches, routers, and so on. If a router fails, traffic can fail over to a standby router. But redundancy really shines in our server clusters. We can either keep passive servers on standby or use multiple active servers. If one server were to fail for any reason, traffic would be redirected to another server and normal operations would continue. These server failover clusters eliminate single points of failure. Redundancy and fault tolerance go hand in hand: redundant components are what let a system tolerate a fault and continue to operate.

We use a Redundant Array of Inexpensive Disks (RAID) mostly in our servers, so that additional hard disk drives (HDDs) are in place in case one disk fails. Any system has four primary resources: processor (CPU), memory, disk, and the network interface. Of these, the disk is the slowest and the most susceptible to failure. RAID provides fault tolerance to increase the system’s availability. We typically use RAID-5, RAID-6, or RAID-10 for our servers.
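Parity-based levels such as RAID-5 survive a single disk failure because a lost block can be recomputed by XOR-ing the surviving blocks with the parity block. The sketch below shows only that arithmetic for one stripe, with made-up data. A real array also spreads parity across the disks and does all of this in the controller or the operating system.

```python
from functools import reduce

def xor_blocks(*blocks: bytes) -> bytes:
    """XOR equally sized blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))

# Hypothetical data blocks, one per disk in the stripe.
disk_a = b"ACCT"
disk_b = b"DATA"
disk_c = b"2024"
parity = xor_blocks(disk_a, disk_b, disk_c)

# Simulate losing disk_b and rebuilding it from the survivors plus parity.
rebuilt_b = xor_blocks(disk_a, disk_c, parity)
print(rebuilt_b)   # b'DATA'
```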

Load balancers are devices used in server farms to distribute ingress traffic evenly across our servers. This makes sure no single server gets too much load, which helps keep uptime high.
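Round robin is one simple distribution strategy: hand requests to each server in turn and skip any that are down. The class below is a minimal sketch of that idea with hypothetical server names. It is not how any particular load balancer product works.

```python
from itertools import cycle

class RoundRobinBalancer:
    """Hand out servers in turn, skipping any marked unhealthy."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.healthy = set(self.servers)
        self._rotation = cycle(self.servers)

    def mark_down(self, server):
        self.healthy.discard(server)

    def mark_up(self, server):
        if server in self.servers:
            self.healthy.add(server)

    def next_server(self):
        if not self.healthy:
            raise RuntimeError("no healthy servers available")
        # One full pass over the rotation is enough to find a healthy server.
        for _ in range(len(self.servers)):
            candidate = next(self._rotation)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy servers available")

balancer = RoundRobinBalancer(["web1", "web2", "web3"])
print([balancer.next_server() for _ in range(3)])   # ['web1', 'web2', 'web3']
balancer.mark_down("web2")
print([balancer.next_server() for _ in range(4)])   # ['web1', 'web3', 'web1', 'web3']
```

Skipping unhealthy servers is also where load balancing meets failover: traffic keeps flowing to the remaining servers when one drops out.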

Site redundancy is another way to keep availability high. Imagine your organization runs hundreds of servers vital to business operations. If your organization suffered an electrical outage or a flood, it would be extremely helpful to your customers if you had a redundant backup site where you could resume normal business operations within the hour.

Speaking of backups, we must back up most, if not all, of our data. Should we lose our data because of a power outage or for any other reason, we can restore it from the last backup tape!

With all this talk about power outages, we can also keep availability high using power generators and Uninterruptible Power Supply (UPS) systems. A UPS is a system that we connect our computers to. The UPS itself is connected to an electrical outlet that keeps its battery charged. Should an organization lose commercial power, the UPS automatically keeps our computers on for about 10-15 minutes. This usually gives us enough time to save our data and shut down our computers properly.

Our HVAC systems are one of the last ways we can keep availability high. HVAC stands for Heating, Ventilation, and Air Conditioning. HVAC really shines in our data centers and server farms. Servers are sensitive to heat and humidity and need a controlled temperature and humidity range to operate reliably. Essentially, HVAC systems give these systems a healthy environment in which to function.

You might not think so, but patching is an often overlooked method of keeping availability high. Regularly testing and installing patches may briefly affect network and system performance, but in the long run, it keeps our systems running longer because patches fix the bugs and vulnerabilities that cause crashes and outages.

References

Gibson, D. (2017). CompTIA Security+: Get Certified Get Ahead: SY0-401 Study Guide. Virginia Beach, VA: YCDA, LLC.